NKN client enables free end-to-end data transmission in a purely decentralized way. Prior to NKN, if a sender client (a mobile app, for example) wanted to send data to a receiver client, either the receiver had to be publicly accessible, which is not practical for consumer applications, or both had to be connected to some centralized server or platform, which introduces additional cost (to build a relay service or pay for one) and security vulnerability (data exposed to a centralized server or 3rd party service). With NKN client, both sender and receiver can stay private in any network condition, and they don’t need any centralized server or platform. The data is routed and delivered in a purely decentralized way, end-to-end encrypted, and free. This is made possible by the NKN public blockchain.

However, there is no free lunch. Packets sent by NKN client are routed through the NKN network, a global overlay network, so that the generated signature chain can be considered safe for consensus. Typically, a packet path consists of multiple hops over the public Internet, which increases latency and packet loss rate. We have designed and implemented proximity routing and a few other mechanisms that can significantly improve latency and reliability, but it’s still hard to beat a direct connection (assuming one is possible) when sender and receiver are not far away from each other.

Luckily, there is a way to greatly improve both latency and reliability by utilizing NKN’s overlay architecture and consuming more bandwidth. To understand how it works, let’s first review some related parts of the NKN client protocol:

- Each NKN client has an NKN address of the form identifier.publicKey, where identifier is an arbitrary string.
- Each NKN client is connected to the NKN node whose ID is closest to the hash of its NKN address, i.e. hash(identifier.publicKey).

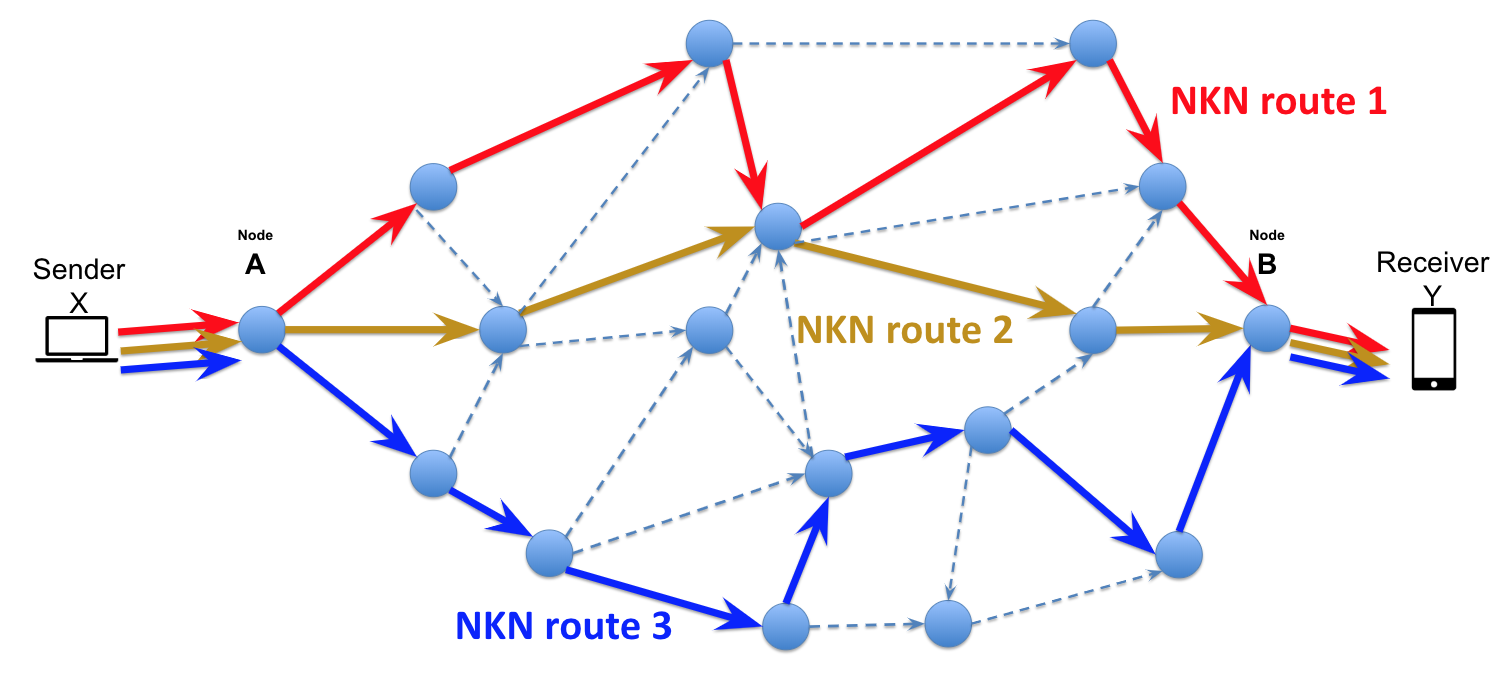
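To make the second rule concrete, here is a minimal sketch of how a client address could be mapped to a node. It is an illustration only: the use of SHA-256, the circular distance metric, and the helper names `address_hash`, `ring_distance` and `closest_node` are all assumptions made for this example, not the actual NKN implementation.

```python
import hashlib

# Size of the ID space. 2**256 matches SHA-256 output and is an assumption.
RING_SIZE = 2 ** 256


def address_hash(address):
    """Hash an address string such as 'identifier.publicKey' to an integer.

    SHA-256 is assumed here purely for illustration.
    """
    digest = hashlib.sha256(address.encode("utf-8")).digest()
    return int.from_bytes(digest, "big")


def ring_distance(a, b):
    """Distance between two IDs on a circular ID space (assumed metric)."""
    d = abs(a - b)
    return min(d, RING_SIZE - d)


def closest_node(address, node_ids):
    """Return the node ID closest to the hash of the given address."""
    target = address_hash(address)
    return min(node_ids, key=lambda node_id: ring_distance(node_id, target))
```

The point to take away is that the node a client connects to is determined by the hash of its full address, so changing the identifier changes the hash and, most likely, the node.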

Now we can describe our solution: both sender and receiver can create multiple sub-clients by adding an additional string to the identifier. For example, instead of creating a single client nkn.publicKey, we can create X clients, where the i-th client’s address is __i__.nkn.publicKey. When the sender wants to send a packet to the receiver, all its sub-clients send the same packet concurrently, with the i-th sender client sending to the i-th receiver client. Whichever packet arrives first triggers the receiver’s onmessage event, and the rest are ignored.
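The scheme above can be sketched in a few lines. This is not the code of nkn-multiclient-js; it only illustrates the idea. The names `sub_client_addresses` and `FirstArrivalReceiver` are hypothetical, the indices are assumed to start at 0, and duplicates are assumed to be recognized by a message ID shared by all copies of a packet (the text does not say how duplicates are detected).

```python
def sub_client_addresses(identifier, public_key, count):
    """Build the addresses of `count` sub-clients: __i__.identifier.publicKey.

    Starting the index at 0 is an assumption.
    """
    return [f"__{i}__.{identifier}.{public_key}" for i in range(count)]


class FirstArrivalReceiver:
    """Fires the callback for the first copy of each message, ignores the rest."""

    def __init__(self, on_message):
        self.on_message = on_message
        self.seen_ids = set()  # message IDs already delivered (assumed mechanism)

    def deliver(self, message_id, payload):
        """Called by any sub-client when a packet arrives.

        Returns True if this copy triggered the callback, False if it was
        a duplicate of an already delivered message.
        """
        if message_id in self.seen_ids:
            return False
        self.seen_ids.add(message_id)
        self.on_message(payload)
        return True
```

A real implementation would also need to bound the set of seen IDs, for example by expiring old entries, which is left out here for brevity.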

The above protocol works well in NKN because by adding an additional string to the identifier, we are creating multiple independent clients that connect to different, unrelated NKN nodes, so the packet is sent concurrently over several independent paths (unless the sender’s or receiver’s own network has a problem). If a node on one path has a problem, it won’t affect the other paths. Therefore, assuming the paths fail independently, the packet loss rate decreases exponentially as the concurrency goes up:

p_multiple_loss = p_loss ^ X

and similarly for latency:

p_multiple(latency > t) = p(latency > t) ^ X

To give a more concrete example, if the single-client packet loss rate is 1% (not actual data, just an example) and we create 3 sub-clients, then the resulting packet loss rate will be 0.01 ^ 3 = 0.000001, i.e. 0.0001%, so reliability is 99.9999%.
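The same arithmetic can be checked with a short script. It simply evaluates the formula above and assumes, as the formula does, that the X paths fail independently; the function name `all_paths_fail` is made up for this example.

```python
def all_paths_fail(p_single, x):
    """Probability that an event of probability p_single happens on all x paths.

    With p_single as the loss rate this is p_multiple_loss; with p_single as
    p(latency > t) it is p_multiple(latency > t). Independence is assumed.
    """
    return p_single ** x


loss = all_paths_fail(0.01, 3)   # 1% single-client loss, 3 sub-clients
reliability = 1.0 - loss
print(f"loss = {loss * 100:.4f}%, reliability = {reliability * 100:.4f}%")
```

In practice paths are never perfectly independent, since they share at least the sender’s and receiver’s own network links, so these numbers are best read as an upper bound on the improvement.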

The above protocol has been implemented in JavaScript as nkn-multiclient-js. It is a drop-in replacement for nkn-client-js and can be used in the same way.

The improvement in latency and reliability comes at the cost of more bandwidth usage, so it’s more suitable for applications that are sensitive to latency or reliability but not to throughput. For example, d-chat, an awesome decentralized chat running as a Chrome/Firefox extension, uses nkn-multiclient-js to improve message reliability and latency.

A potential improvement to the above protocol is to use erasure coding to control the latency-throughput tradeoff. Instead of sending the same packet X times using X sub-clients, we can use erasure coding to send X different packets using X different sub-clients, such that the original packet can be decoded once any K out of the X packets have arrived. This way one can get lower latency but lower throughput with a smaller K/X, or higher throughput but higher latency with a larger K/X.
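To get a feel for this tradeoff, the sketch below computes the delivery probability and the bandwidth overhead of a K-out-of-X scheme. It does not implement an erasure code. It assumes that paths lose packets independently with the same probability, and that each coded packet is 1/K the size of the original, as with a typical maximum distance separable code. The function names are made up for this example.

```python
from math import comb


def delivery_probability(p_loss, k, x):
    """Probability that at least k of x coded packets arrive.

    Assumes each packet is lost independently with probability p_loss.
    """
    p_ok = 1.0 - p_loss
    return sum(
        comb(x, j) * p_ok ** j * p_loss ** (x - j)
        for j in range(k, x + 1)
    )


def bandwidth_overhead(k, x):
    """Total bytes sent relative to the original packet size.

    Assumes each coded packet carries 1/k of the original data.
    """
    return x / k
```

With K = 1 this reduces to the replication scheme described earlier: `delivery_probability(0.01, 1, 3)` equals 1 - 0.01 ^ 3, at an overhead of 3x. Choosing K = 2 and X = 3 cuts the overhead to 1.5x, at the price of tolerating only one lost packet instead of two.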

In summary, the NKN multi-client SDK gives developers more flexibility in meeting the needs of their applications and in fine-tuning the balance between packet reliability, latency and bandwidth usage.