Background

NCP is a session-based NKN control protocol. It transmits data simultaneously over multiple connections through the global decentralized NKN network: it divides large data into packets (segmentation) and sends them to the destination over these connections. This improves the efficiency of data transmission and provides a solid infrastructure for building distributed applications.

NKN node hardware and network bandwidth vary widely, so different links have different throughput, latency, round-trip time (RTT), and loss rate. Ideally, in simultaneous multi-channel transmission each channel receives a share of the data that matches its throughput, so that within the same time window all connections send their data and receive their ACKs together. Each connection then does its best, neither overloaded nor underused. Our proposal collects the throughput of each channel, estimates RTT and the retransmission timeout (RTO) from real-time data transmission, and dynamically adjusts the session and connection send windows to approach this ideal state. This is the design goal of the proposal.

Current Process

Every NCP session has two endpoints. Each endpoint has a default startup configuration, such as the number of connections, send window size, receive window size, and ACK interval. The endpoints establish their connections by exchanging handshake packets. After the handshake, both endpoints agree on the same number of connections, send window size, and receive window size.
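The text only states that both endpoints end up with the same values; how differing proposals are reconciled is not specified here. A minimal sketch, assuming each endpoint adopts the smaller of the two proposed values (the `sessionConfig` type and `negotiate` function are hypothetical):

```go
package main

import "fmt"

// sessionConfig is a hypothetical subset of an endpoint's startup configuration.
type sessionConfig struct {
	NumConnections int
	SendWindowSize int // bytes
	RecvWindowSize int // bytes
}

func minInt(a, b int) int {
	if a < b {
		return a
	}
	return b
}

// negotiate returns the parameters both endpoints use after the handshake.
// Assumption: each side adopts the smaller of the two proposed values.
func negotiate(local, remote sessionConfig) sessionConfig {
	return sessionConfig{
		NumConnections: minInt(local.NumConnections, remote.NumConnections),
		SendWindowSize: minInt(local.SendWindowSize, remote.SendWindowSize),
		RecvWindowSize: minInt(local.RecvWindowSize, remote.RecvWindowSize),
	}
}

func main() {
	local := sessionConfig{NumConnections: 4, SendWindowSize: 4 << 20, RecvWindowSize: 4 << 20}
	remote := sessionConfig{NumConnections: 8, SendWindowSize: 2 << 20, RecvWindowSize: 4 << 20}
	agreed := negotiate(local, remote)
	fmt.Printf("%+v\n", agreed)
	// Both sides compute the same result because min is symmetric.
	fmt.Println(agreed == negotiate(remote, local))
}
```

Taking the minimum is symmetric, so each endpoint can compute the agreed values independently from the other side's handshake packet.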

When a session starts transmitting data, it doesn’t know the throughput or latency of each connection, so all connections start with the same window size of 16 packets. As a result, each connection starts with the same load.

Each time a connection receives an ACK from the other endpoint, it increases its window size by 1. For example, if a connection sends 16 packets and receives all 16 ACKs, its send window grows from 16 to 32, allowing it to send 32 packets: its workload doubles. A connection that performs well therefore grows its window quickly and soon reaches 256, the MaxConnectionWindowSize, after which it stops growing.
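A minimal Go sketch of the current growth rule, using the values from the text (initial window 16, MaxConnectionWindowSize 256). The names `onAck` and `ackRound` are hypothetical, and a "round" here assumes every packet in the window is ACKed:

```go
package main

import "fmt"

// Values taken from the current process described above.
const (
	initialConnectionWindowSize = 16
	maxConnectionWindowSize     = 256 // MaxConnectionWindowSize
)

// onAck models the current rule: +1 packet of window per ACK, capped at
// MaxConnectionWindowSize.
func onAck(window int) int {
	if window < maxConnectionWindowSize {
		window++
	}
	return window
}

// ackRound sends a full window and receives an ACK for every packet.
func ackRound(window int) int {
	acks := window
	for i := 0; i < acks; i++ {
		window = onAck(window)
	}
	return window
}

func main() {
	w := initialConnectionWindowSize
	for round := 1; round <= 6; round++ {
		w = ackRound(w)
		fmt.Printf("after round %d: window=%d\n", round, w)
	}
}
```

Starting from 16, the window doubles each round (32, 64, 128) until it reaches 256 and then stays there, regardless of how much faster the connection could go.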

On the other hand, if a connection does not receive all of its ACKs, for example it sends 16 packets but receives only 14 ACKs within the timeout threshold, its window first grows by 14 (16 + 14 = 30) and is then halved once for each of the 2 timed-out packets (30 / 2 = 15, then 15 / 2 = 7, rounding down). The workload of this connection therefore drops to 7.
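The worked example can be reproduced with integer arithmetic. This sketch assumes, as the example does, that ACKs are applied before the timeouts and that halving rounds down; it also keeps the minimum window of 1. The function names are hypothetical:

```go
package main

import "fmt"

// onTimeout halves the window for one timed-out packet. Integer division
// rounds down (15 / 2 = 7), and the window never drops below 1.
func onTimeout(window int) int {
	window /= 2
	if window < 1 {
		window = 1
	}
	return window
}

// afterRound applies `acked` ACKs (+1 each) and then halves once per
// timed-out packet, in the order used by the worked example. The 256 cap is
// not modeled because the example stays below it.
func afterRound(window, acked, timedOut int) int {
	window += acked
	for i := 0; i < timedOut; i++ {
		window = onTimeout(window)
	}
	return window
}

func main() {
	fmt.Println(afterRound(16, 14, 2)) // 16 + 14 = 30, 30/2 = 15, 15/2 = 7
}
```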

This design works well in most cases: it increases a connection’s window size while sending and receiving go smoothly, and it reduces the window size quickly when packets time out. However, two aspects could be improved:

- First, each connection’s window size is capped at 256, which prevents high-throughput connections from transmitting more data.

- Second, consider two connections: one with high throughput, for example 1024 packets per second, and one with lower throughput, for example 256 packets per second. If the latency of both connections stays below the timeout threshold, no packets time out, and the current process assigns the same workload to both connections (both windows simply grow to 256). Clearly, this workload does not match their capabilities.
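To make the mismatch concrete, assume each connection sends at exactly its throughput and ignore latency. The sketch below (hypothetical helper names) compares how long each connection needs to send its assigned packets when both windows are capped at 256, versus when the same 512 packets are split in proportion to throughput:

```go
package main

import "fmt"

// finishTimes returns, for each connection, how many seconds it needs to send
// its assigned packets at its throughput (packets/s). Latency is ignored.
func finishTimes(packets, throughput []float64) []float64 {
	t := make([]float64, len(packets))
	for i := range packets {
		t[i] = packets[i] / throughput[i]
	}
	return t
}

// proportional splits total packets in proportion to throughput.
func proportional(total float64, throughput []float64) []float64 {
	sum := 0.0
	for _, r := range throughput {
		sum += r
	}
	out := make([]float64, len(throughput))
	for i, r := range throughput {
		out[i] = total * r / sum
	}
	return out
}

func main() {
	rates := []float64{1024, 256} // packets/s, from the example above
	equal := []float64{256, 256}  // both windows capped at 256
	fmt.Println("equal split, finish times (s):", finishTimes(equal, rates))

	fair := proportional(512, rates)
	fmt.Println("proportional split:", fair, "finish times (s):", finishTimes(fair, rates))
}
```

With equal windows the fast connection finishes in 0.25 s while the slow one needs 1 s; with a 4:1 split both finish in about 0.4 s, which is the "same time window" behavior described in the Background.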

Proposal

The goals of this proposal are:

- First, each connection should be able to transmit at its full capacity, limited only by the CPU and memory of each node.

- Second, each connection should converge toward its capacity in all circumstances, such as at startup, after a network exception, or after disconnecting and reconnecting.

We propose the following solution:

- After the handshake completes and the session is established, we calculate the session’s total window size, in packets, as:

sendWindowPacketCount = sendWindowSize / sendMtu

At the beginning, we don’t know the capabilities of each connection, so we assign the same window size to each connection:

// n is the number of connections

initialWindowSize = sendWindowPacketCount / n
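With the defaults given under Details for Discussion (a 4 MB sendWindowSize and a 1024-byte sendMtu), the session window is 4096 packets. A small sketch of both formulas, assuming 4 MB means 4 * 1024 * 1024 bytes and n >= 1; `initialWindows` is a hypothetical helper:

```go
package main

import "fmt"

// Defaults quoted in "Details for Discussion". Treating 4 MB as
// 4 * 1024 * 1024 bytes is an assumption.
const (
	defaultSendWindowSize = 4 * 1024 * 1024 // bytes
	defaultSendMtu        = 1024            // bytes
)

// initialWindows returns sendWindowPacketCount and the equal initial window
// assigned to each of n connections (integer division, as in the formulas).
// n must be at least 1.
func initialWindows(sendWindowSize, sendMtu, n int) (int, []int) {
	sendWindowPacketCount := sendWindowSize / sendMtu
	initialWindowSize := sendWindowPacketCount / n
	windows := make([]int, n)
	for i := range windows {
		windows[i] = initialWindowSize
	}
	return sendWindowPacketCount, windows
}

func main() {
	total, windows := initialWindows(defaultSendWindowSize, defaultSendMtu, 4)
	fmt.Println("session window (packets):", total, "per connection:", windows)
}
```

With 4 connections each starts at 1024 packets; with 3 connections integer division gives 1365 each, leaving 1 of the 4096 packets unassigned.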

- Adjust send window size dynamically

As in the current process, a connection’s window size increases by 1 for each ACK it receives and is halved when a timeout occurs. However, we remove the MaxConnectionWindowSize limit, so a window can grow beyond 256, up to session.sendWindowPacketCount.

As before, the minimum window size is 1. This means a connection never stops working: even at the minimum, it keeps sending one packet at a time and waiting for the response.

A window is also halved when a connection fails to write a packet to the network, because a write failure indicates a network exception.

Because each connection adjusts its window size independently, the total of all connection windows may be greater or less than session.sendWindowPacketCount. Therefore, after a connection adjusts its window size, the session must normalize the windows so that their total matches session.sendWindowPacketCount.
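The proposal does not spell out how the session normalizes the windows. One plausible approach, shown here as an assumption rather than NCP’s actual method, rescales every window proportionally so the total equals session.sendWindowPacketCount, keeps each window at least 1, and gives the rounding remainder to the largest window. It assumes the total is much larger than the number of connections, as with the 4096-packet default:

```go
package main

import "fmt"

// normalize rescales per-connection windows so that they sum to total
// (session.sendWindowPacketCount) while keeping their ratios. Proportional
// rescaling is an assumption. Windows are >= 1 under the proposal, so their
// sum is positive. Each result is kept >= 1 and the rounding remainder goes
// to the largest window; total is assumed to be much larger than
// len(windows).
func normalize(windows []int, total int) []int {
	out := make([]int, len(windows))
	if len(windows) == 0 {
		return out
	}
	sum := 0
	for _, w := range windows {
		sum += w
	}
	assigned, largest := 0, 0
	for i, w := range windows {
		out[i] = w * total / sum // rounds down
		if out[i] < 1 {
			out[i] = 1
		}
		assigned += out[i]
		if w > windows[largest] {
			largest = i
		}
	}
	out[largest] += total - assigned
	return out
}

func main() {
	// Windows after independent adjustments no longer add up to 4096.
	fmt.Println(normalize([]int{3000, 900, 500}, 4096))
}
```

For windows of 3000, 900, and 500 packets (4400 in total), normalizing to 4096 gives 2794, 837, and 465. Because the rescaling preserves the ratios the connections reached through their own ACKs and timeouts, it does not undo the per-connection adjustment.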

Proof of Concept Test

To test the proposed design, we developed a component that mocks network connections with configurable parameters. MockConn is available at https://github.com/nkn/mockconn. It implements the standard library’s net.Conn interface and can simulate different throughput, latency, and loss rates. It can also close, pause, and resume reading or writing on either endpoint, which makes it useful for testing different network states.

After setting up the network simulator environment, we tested this proposed solution and got these results:

- Transmission speed

The new version transferred data faster in every test case, by about 4% to 61% depending on the scenario.

| Connections | Previous Version (MB/s) | New Version (MB/s) | Test Case |
|---|---|---|---|
| 1 | 0.945 | 0.985 | Base case: one client, throughput 1024 packets/s, latency 50 ms, loss 0 |
| 2 | 0.976 | 1.11 | Base case plus 1 client with throughput 128 packets/s, latency 500 ms, loss 0 |
| 3 | 1.109 | 1.155 | Base case plus 2 clients with throughput 128 packets/s, latency 500 ms, loss 0 |
| 4 | 1.112 | 1.358 | Base case plus 3 clients with throughput 128 packets/s, latency 500 ms, loss 0 |
| 5 | 1.265 | 1.48 | Base case plus 4 clients with throughput 128 packets/s, latency 500 ms, loss 0 |
| 2 | 1.205 | 1.946 | Two clients with the same throughput of 1024 packets/s, one with low latency (50 ms) and one with high latency (500 ms) |
| 2 | 1.34 | 1.798 | Two connections with the same throughput and different loss rates: low (0.01) and high (0.1) |
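The per-case gains can be checked directly from the table; this sketch simply recomputes (new / previous - 1) * 100 for each row:

```go
package main

import "fmt"

// result holds one row of the table above (speeds in MB/s).
type result struct {
	connections int
	prev, cur   float64
}

// speedupPercent returns the relative gain of cur over prev, in percent.
func speedupPercent(prev, cur float64) float64 {
	return (cur/prev - 1) * 100
}

var results = []result{
	{1, 0.945, 0.985},
	{2, 0.976, 1.11},
	{3, 1.109, 1.155},
	{4, 1.112, 1.358},
	{5, 1.265, 1.48},
	{2, 1.205, 1.946},
	{2, 1.34, 1.798},
}

func main() {
	for _, r := range results {
		fmt.Printf("%d connections: %.3f -> %.3f MB/s (%+.1f%%)\n",
			r.connections, r.prev, r.cur, speedupPercent(r.prev, r.cur))
	}
}
```

The smallest gain is about 4.1% (3 connections) and the largest about 61.5% (two connections with different latencies).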

- Window size adjusted in proportion to connection throughput

The total session window size is set after the handshake, and each connection’s window size is adjusted according to its received ACKs and timeouts.

We tested several startup scenarios and network error conditions. In all of them, the connections’ window sizes converged toward the ratio of their throughputs.

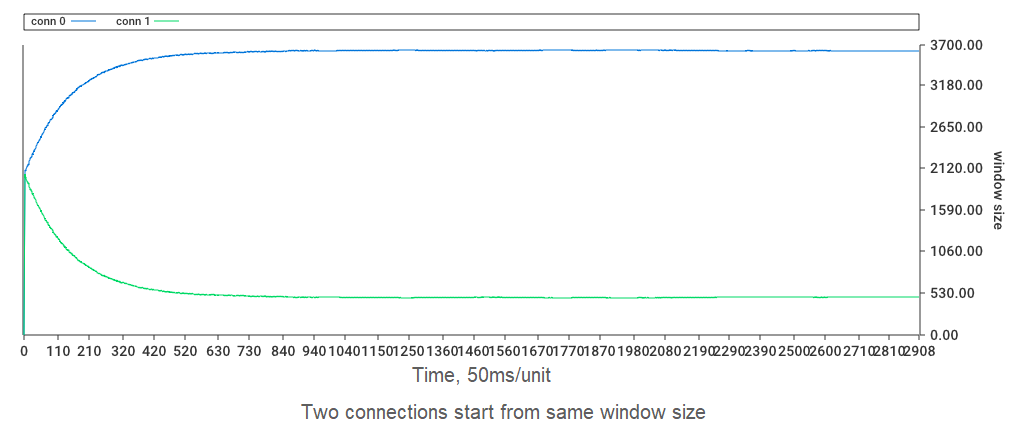

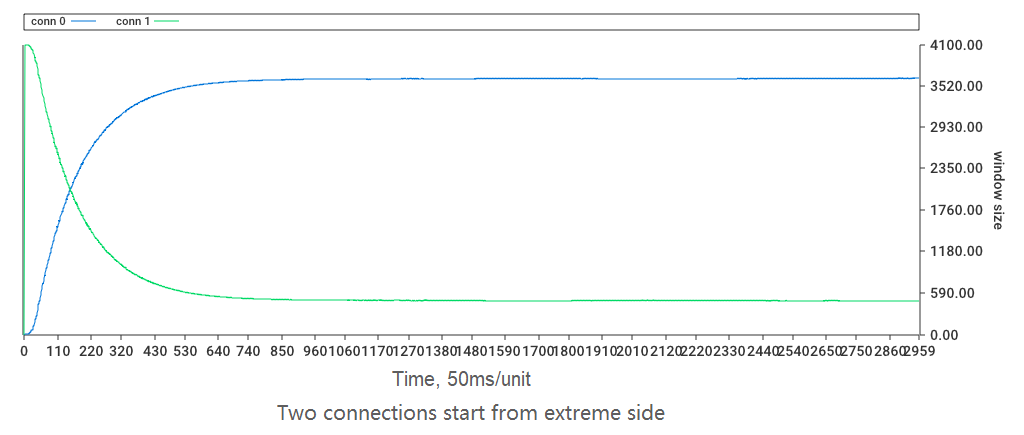

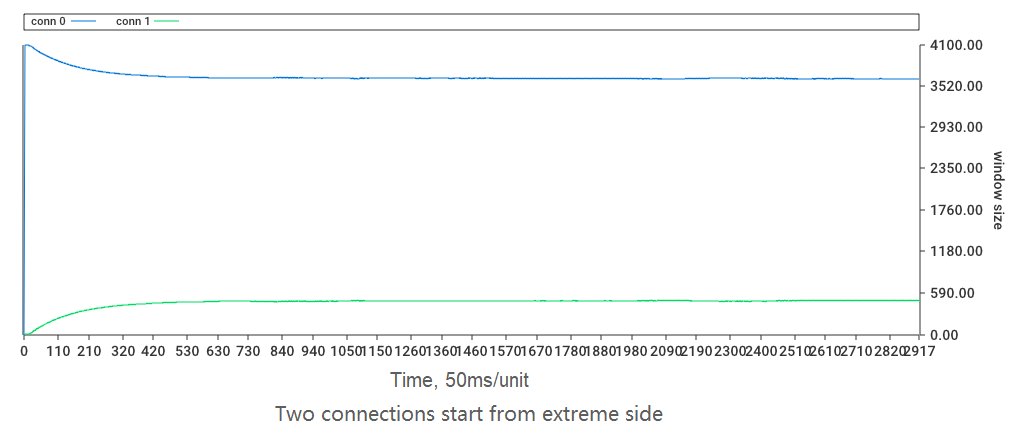

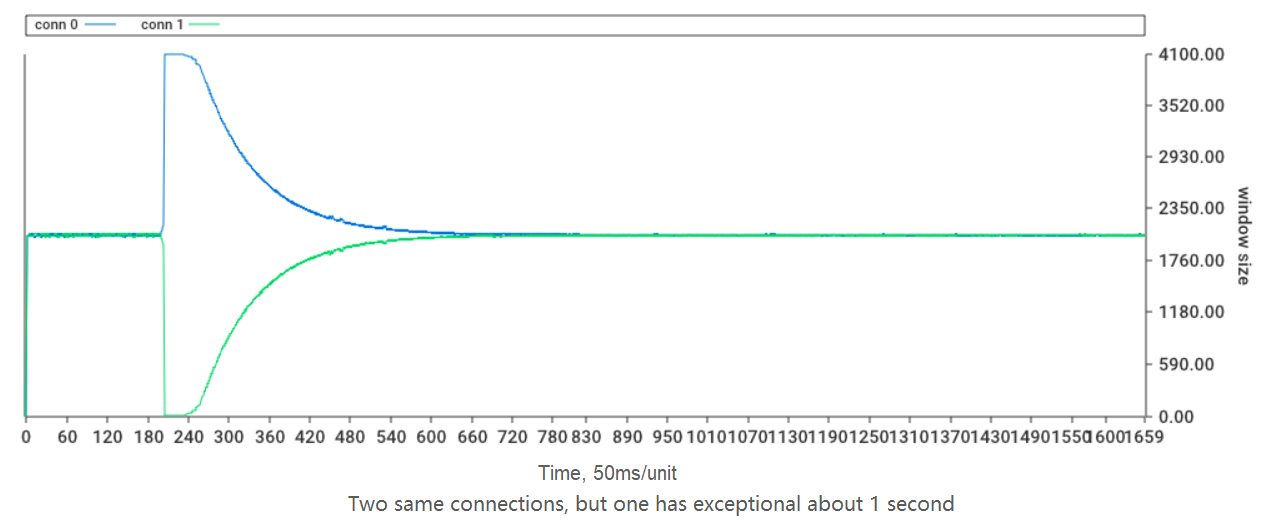

In the first three tests we set up two connections: connection one has a high throughput of 1024 packets/second, and connection two has a throughput of 128 packets/second.

- First, both connections start with the same window size. As they receive ACKs and adjust their windows, the window sizes approach the ratio of their throughputs.

- Second, the low-throughput connection starts with a small initial window size and the high-throughput connection with a large one. After about 10 seconds, the window sizes approach the ratio of their throughputs.

- Third, the reverse of the second case: the low-throughput connection starts with a large initial window size and the high-throughput connection with a small one. After about 10 seconds, the window sizes again approach the ratio of their throughputs.

- Fourth, we start two connections with the same throughput. After they have run for a while, we simulate a network break on the second connection: for one second its read function fails and cannot read data, and then it recovers. The graph shows that the window sizes again approach the ratio of their throughputs.

Details for Discussion

- The total session window size is session.sendWindowSize / session.sendMtu. The default sendWindowSize is 4 megabytes and the default sendMtu is 1024 bytes. sendMtu is determined by the network MTU, and sendWindowSize is bounded by the available memory; both are configurable. As machines have more and more memory, what default sendWindowSize would be best?

- Our tests show that the window sizes need about 10 seconds to approach the ratio of the connections’ throughputs. This time is determined mainly by network latency. Is there a better way to shorten it?

- We use received ACKs to measure connection throughput, so until enough ACKs have arrived, our estimate of a connection’s throughput is poor. Is there a good way to measure network throughput quickly?

Feasibility and Implementation Cost

Implementing this proposal should be straightforward and low cost: we only need to modify the code that adjusts each connection’s window size so that it follows the connection’s throughput.